Application savings are locked behind infrastructure credibility

The largest cloud cost savings live in application code owned by other teams. Reaching them requires trust built through infrastructure wins.


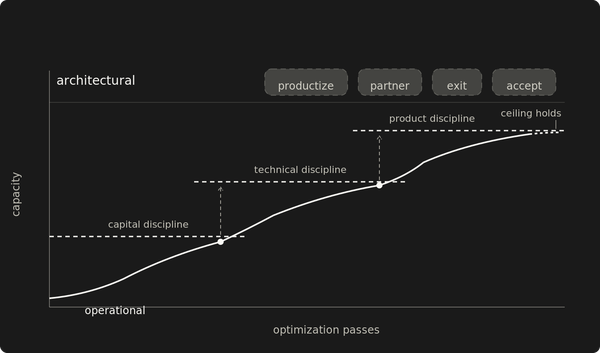

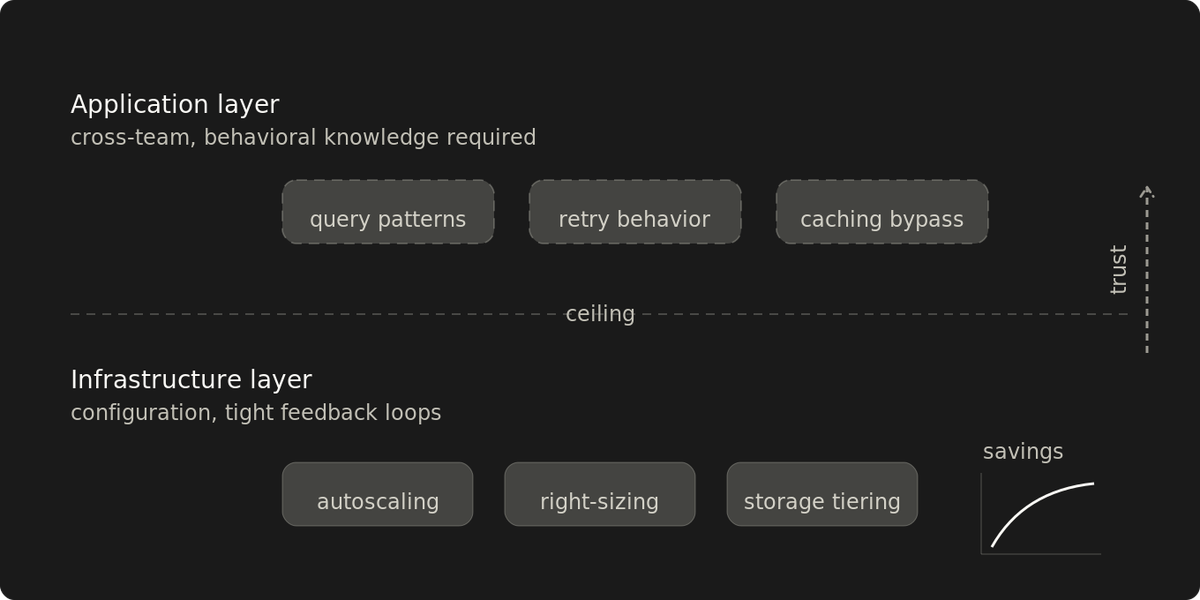

Infrastructure optimization handles the configuration layer: autoscaling, resource allocation, instance scheduling, storage tiering, and cleanup of orphaned resources. Results land within a billing cycle. But the configuration layer yields diminishing returns, and the remaining cost drivers are application-owned: services, queries, caching patterns, and other code that belongs to other teams.

The cheapest savings land first. The biggest ones come last, because they depend on the knowledge and credibility that infrastructure work builds.

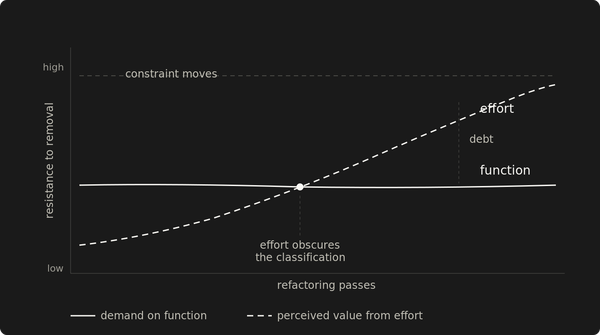

The configuration layer has a ceiling

The signal is in the type of remaining opportunities. When every change yields single-digit percentage improvements and the remaining cost drivers are all application behavior, the configuration surface is exhausted. Traffic growth can mask progress: a flat bill doesn't mean the ceiling has been reached if new workloads are absorbing the gains. The diagnostic is the opportunity set, not the bill trajectory.
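The diagnostic above can be made concrete as a check over the remaining opportunity set. What follows is a minimal sketch, not a prescribed method. It assumes a hypothetical `CostDriver` record carrying each driver's monthly cost, its owner, and the largest configuration-layer saving still available on it. "Single-digit" is read as a 10% threshold, and the "top cost drivers" are read as the three most expensive. The field names, thresholds, and numbers are all illustrative.

```python
from dataclasses import dataclass


# Hypothetical record for one cost driver; field names are illustrative and
# not taken from any cost-attribution tool's schema.
@dataclass
class CostDriver:
    name: str
    monthly_cost: float          # spend currently attributed to this driver
    owner: str                   # "platform" (configuration layer) or "application"
    best_config_gain_pct: float  # largest remaining config-layer saving, % of its cost


def configuration_ceiling_reached(drivers, single_digit=10.0, top_n=3):
    """Assumed heuristic: the configuration surface is exhausted when no
    remaining config change reaches `single_digit` percent and the `top_n`
    most expensive drivers are all application-owned. The bill trajectory
    is deliberately not an input."""
    if any(d.best_config_gain_pct >= single_digit for d in drivers):
        return False
    top = sorted(drivers, key=lambda d: d.monthly_cost, reverse=True)[:top_n]
    return bool(top) and all(d.owner == "application" for d in top)


# Illustrative numbers only.
drivers = [
    CostDriver("orders-db", 42_000, "application", 4.0),
    CostDriver("search-cache", 31_000, "application", 6.5),
    CostDriver("batch-workers", 18_000, "application", 3.0),
    CostDriver("shared-nodes", 12_000, "platform", 8.0),
]
print(configuration_ceiling_reached(drivers))  # True
```

The function never looks at month-over-month spend. A bill that stays flat because new workloads absorb the gains does not change its answer.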

Below that ceiling, infrastructure work generates more than cost reduction. Tuning autoscaling against real traffic surfaces which services consume disproportionate resources, where traffic concentrates, and which scaling patterns indicate application-level waste. Cost attribution tools show what's expensive and when.

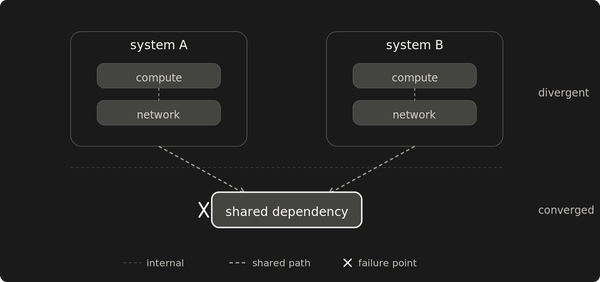

They miss the behavioral root cause: the interaction pattern between services that explains why a cost spike correlates with a batch window, or why a database tier stays provisioned for a peak that only one service's retry logic creates.
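The sketch below shows the kind of "what and when" signal that attribution data supports. It assumes hourly cost samples for one service and a known batch window. The function `window_cost_ratio` and its inputs are hypothetical and do not come from any specific tool. A high ratio confirms that cost concentrates in the window. It cannot say whether the batch job itself is responsible, or something the job triggers elsewhere, such as a retry storm.

```python
from statistics import mean


def window_cost_ratio(hourly_cost, window_hours):
    """Mean hourly cost inside `window_hours` divided by the mean outside it.

    `hourly_cost` is a list indexed by hour of day; `window_hours` is a set
    of hour indices (a hypothetical batch window).
    """
    inside = [c for h, c in enumerate(hourly_cost) if h in window_hours]
    outside = [c for h, c in enumerate(hourly_cost) if h not in window_hours]
    if not inside or not outside:
        raise ValueError("window must split the series into two non-empty parts")
    return mean(inside) / mean(outside)


# Illustrative series: flat spend with a spike during hours 1-3.
costs = [100.0] * 24
for hour in (1, 2, 3):
    costs[hour] = 300.0
print(window_cost_ratio(costs, {1, 2, 3}))  # 3.0
```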

Application-level proposals depend on behavioral knowledge

A resource that looks oversized from a dashboard might be correctly provisioned for a workload pattern that only surfaces during specific windows. Retry behavior in a downstream service can inflate an allocation that looks wasteful from the outside.
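A little arithmetic shows how retry behavior can hide inside an allocation. This is a minimal sketch under simplifying assumptions: failures are independent, retries fire immediately with no backoff or jitter, and every attempt hits the same shared tier. The function names and numbers are hypothetical. Under these assumptions, a request with up to `r` retries and per-attempt failure probability `p` makes `1 + p + p**2 + ... + p**r` calls on average.

```python
def expected_attempts(failure_rate, max_retries):
    """Expected calls per logical request, assuming independent failures and
    immediate retries (no backoff). Attempt k+1 runs only if the first k
    failed, giving the geometric sum 1 + p + p**2 + ... + p**max_retries."""
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be in [0, 1]")
    if max_retries < 0:
        raise ValueError("max_retries must be non-negative")
    return sum(failure_rate ** k for k in range(max_retries + 1))


def load_on_tier(base_qps, failure_rate, max_retries):
    """Request rate the shared tier sees once retries are counted."""
    return base_qps * expected_attempts(failure_rate, max_retries)


# Illustrative numbers: 1,000 logical requests/s, up to 3 retries.
steady = load_on_tier(1_000, 0.01, 3)    # about 1,010 qps
incident = load_on_tier(1_000, 0.5, 3)   # 1,875 qps
worst = load_on_tier(1_000, 1.0, 3)      # 4,000 qps
print(round(steady, 1), incident, worst)
```

Suppose a tier is sized for the 50%-failure window. Most of the time it serves about 1,010 qps, so a dashboard shows it at roughly 1.86 times the load it needs. Only someone who knows the retry policy can say whether that headroom is justified or whether the policy should change.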

Infrastructure tuning generates this knowledge as a side effect. After a few billing cycles of right-sizing and investigating anomalies, cost spikes become traceable to specific interaction patterns. The work builds a behavioral model no dashboard provides, because the model needs context about why resources are provisioned the way they are. That context surfaces when you try to change them.

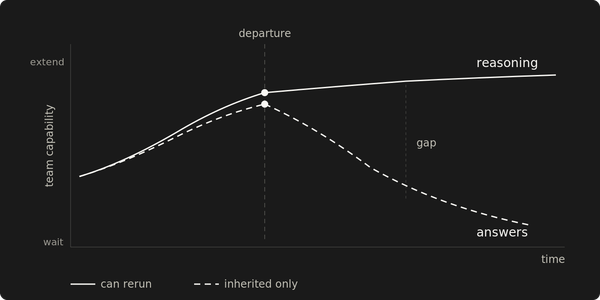

Demonstrated results change how teams receive proposals

The team that owns the application code needs to see that the analysis is accurate and the proposed tradeoff holds under production conditions.

Mandated changes without that track record produce compliance rather than engagement. The owning team implements the minimum change that satisfies the requirement. Mandates backed by demonstrated platform knowledge get deeper engagement: the team tests the tradeoff against its own understanding, surfaces constraints the analysis missed, and proposes alternatives that achieve the same savings with less risk. The team has seen the platform team's previous work land in the cost reports.

A cost reduction that shows up in the monthly report changes the conversation. The platform team's track record is already in the budget data when it asks teams to change their code.

Proposals without empirical backing face reasonable resistance

Analysis built from cost attribution alone references behavior the analyst hasn't observed firsthand. When the owning team asks questions that attribution data can't answer (why the allocation is that size, what happens during the batch window, whether the proposed change affects a downstream dependency), the proposal stalls.

When the analysis misses something, the correction comes from the owning team. Building from correction is slower than building from demonstrated depth.

Meanwhile, infrastructure savings that require less cross-team coordination sit untouched. The initiative has spent its early credibility budget on a cold proposal, and pressure for visible progress goes unanswered.

The sequencing produces its prerequisites

Infrastructure work requires less coordination and produces both behavioral knowledge and visible results. Application-level work depends on both.

Infrastructure changes land within a billing cycle. That matters when leadership wants progress on the cost line this quarter. The biggest savings take longer because they depend on knowledge and credibility that only accumulate through the smaller ones.

The ceiling is visible when each change yields marginal improvements and the top cost drivers are all application-owned. Application-level work is ready when the platform team describes a service's cost behavior, the owning team recognizes the description as accurate, and that team starts asking what to change. Architectural work starts when teams bring cost questions unprompted.

Early infrastructure wins are the access mechanism for everything above the ceiling.