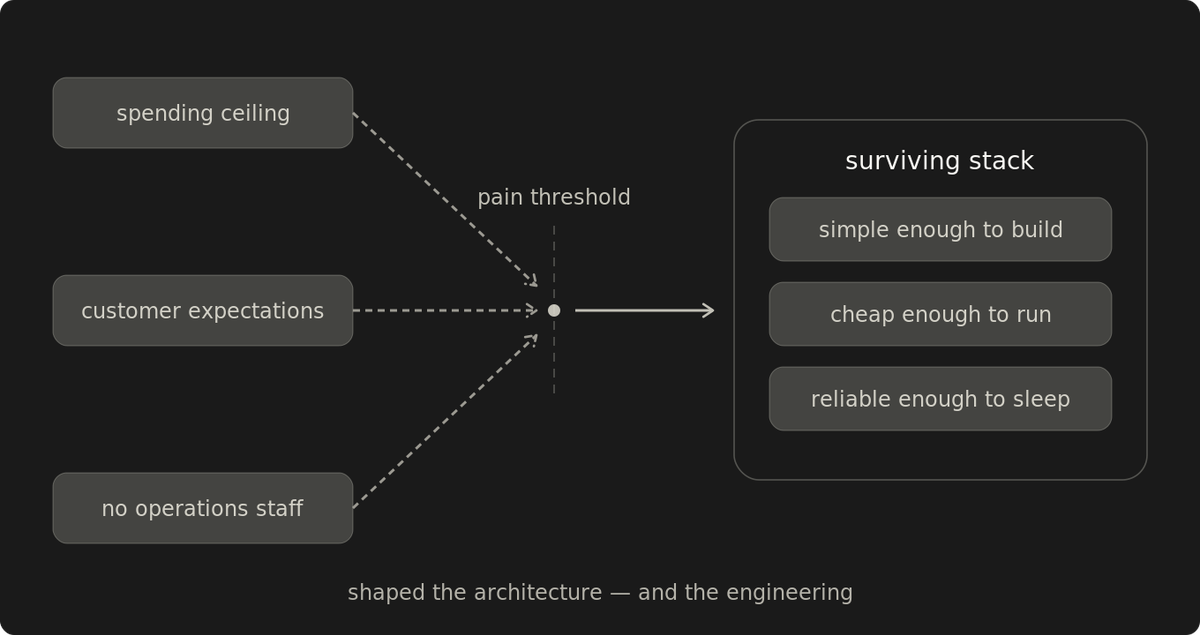

Budget constraints are architecture inputs

A spending ceiling changes which tools get selected, where capabilities live, and what never enters the stack.

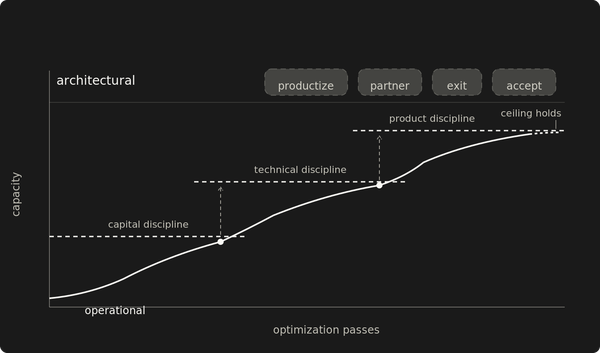

A budget is an architectural boundary condition. It decides which capabilities exist, where they live, and which risks remain unfunded. Under real uptime pressure, that boundary produces engineering discipline. Without uptime pressure, the same budget just produces cheaper systems.

Under a hard budget, every decision runs through a filter: does this solve a problem costing money or sleep right now? What survives is right-sized to actual operational pain, not to speculative complexity that accumulates when the filter is optional.

Fifteen production systems ran on this filter for eleven years. No dedicated operations staff. DevOps tooling (source control hosting and CI runners) never exceeded $60/month. Hosting costs scaled separately per customer.

Spending ceilings change which tools get selected

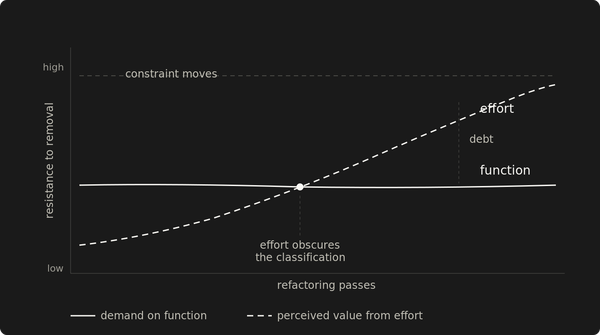

When budget pressure is weak, teams approve tooling for possible future needs, and the stack expands faster than actual operational pain justifies. The CI platform gets approved for workflows that might eventually be needed. The monitoring stack expands for comprehensiveness. The orchestration layer arrives because the architecture might grow into it. Every tool made sense when it was approved. Six months later you're maintaining nine components for three actual problems, and each one carries a maintenance cost the budget didn't account for.

Under a spending ceiling, instrumentation has to justify itself against actual failure cost. A tool enters the stack when a specific problem crosses the threshold where the fix is cheaper than the pain. Almost nothing enters on speculation.

Builds started producing different artifacts on different developers' laptops. Same source, different output depending on Java version and library configuration. Maven fixed that. Standardized builds, reproducible output, zero additional infrastructure cost.

Manual deployments at midnight were costing sleep and producing errors on revenue-critical systems. Jenkins removed that labor and the operator error that came with it.

The source control platform changed its pricing. Once admin and maintenance costs were counted, self-hosting became uneconomical. GitLab's free license included unlimited users, which gave customers direct visibility into build and deployment flow without adding per-seat cost.

Customer expectations are the harder constraint

Budget was not the quality floor. Customer expectations were.

Operational systems (anything where downtime stops someone's work) create an implicit SLA regardless of what's written in a contract. Customers don't track your infrastructure costs. They track whether the system works when they need it. The gap between what customers expect and what the budget allows adds a second decision rule: does this solve the customer's actual problem, or a problem the customer doesn't have?

Monitoring targets page load times and availability, the metrics that generate phone calls. Past a certain point, observability starts paying to debug complexity that the architecture should have rejected earlier.

Across fifteen production systems, that decision rule held for over a decade. Most ran on controlled infrastructure, fully self-funded. Infrastructure monitoring used free uptime and response-time tools. Application monitoring was a log parser that sent emails. Logging levels determined alarm severity, and an error-level log triggered a page. Often the monitoring caught the failure before a customer contacted support, and the fix was already underway.
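A minimal sketch of that kind of log parser, in Python. The log line format, the `ACTIONS` mapping, and the names `Alert` and `alerts_from_log` are assumptions for illustration. The text only establishes that log level set severity and that an error-level entry paged someone, so the warning-to-email mapping is a guess.

```python
import re
from dataclasses import dataclass

# Assumed line shape: "<date> <time> <LEVEL> <message>".
# The real log format is not given in the article.
LINE = re.compile(r"^(?P<ts>\S+ \S+) (?P<level>[A-Z]+) (?P<msg>.*)$")

# Hypothetical severity mapping. Only ERROR -> page comes from the text.
ACTIONS = {"ERROR": "page", "WARN": "email"}


@dataclass
class Alert:
    action: str
    level: str
    message: str


def alerts_from_log(lines):
    """Return one Alert per log line whose level maps to an action."""
    alerts = []
    for line in lines:
        match = LINE.match(line.strip())
        if not match:
            continue  # unparseable lines are ignored in this sketch
        action = ACTIONS.get(match.group("level"))
        if action:
            alerts.append(Alert(action, match.group("level"), match.group("msg")))
    return alerts
```

The design consequence is the one described above: with a mapping this blunt, an error-level log line that nobody needs to act on becomes a false page, so the discipline has to live in the application code.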

That constraint shaped the code itself. Because the monitoring was simple, the code had to be ruthless about error handling. Every logged error had to mean something actionable.

Capabilities land in the cheapest layer that holds them

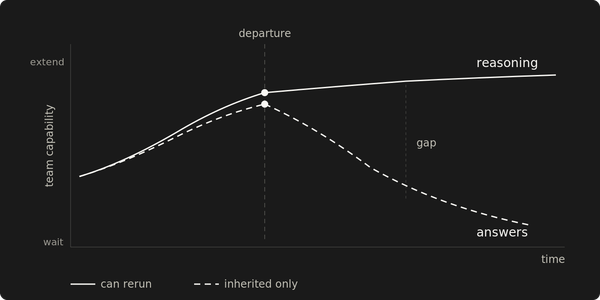

Not having a dedicated operations role didn't eliminate ops. It concentrated architectural responsibility. The same people writing application code built every operational capability, maintained it, and got paged when it broke. When the builders carry the operational consequences, architecture gets simpler and sharper.

These mechanisms fit the workload: session-based Java web applications on single servers, deployed during off hours. A different workload shape would have produced different mechanisms. The constraint logic is the same.

Zero-downtime deployment came from the cheapest mechanism available: Tomcat's parallel deployment on a single server with versioned WAR files, where requests carrying a session from the old version continue routing to it while new sessions hit the new.
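The routing rule can be modeled in a few lines of Python. This is a simplified model of the behavior described above, not Tomcat's implementation. The function name `pick_version` and the dictionary shape are hypothetical, and picking the latest version by plain string comparison is an assumption (it is why zero-padded version strings such as `001` and `002` are used here).

```python
def pick_version(session_id, sessions_by_version):
    """Choose which deployed version should serve a request.

    sessions_by_version maps a version string to the set of session ids
    that version currently holds.
    """
    # Assumption: the newest version is the largest string.
    latest = max(sessions_by_version)
    if session_id is not None:
        for version, sessions in sessions_by_version.items():
            if session_id in sessions:
                return version  # an existing session stays on its version
    # New visitors and unknown or expired sessions go to the newest version.
    return latest
```

Once the old version holds no sessions, nothing routes to it and it can be retired.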

What made this safe was a database compatibility contract built for the purpose: additive schema changes only, with no drops or renames until the old version had fully retired, plus backward-compatible data formats. The contract did the heavy lifting, because no deployment could break a version still serving requests. Tomcat matched sessions to versioned contexts via the JSESSIONID cookie. Zero additional infrastructure cost.
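A contract like this is cheap to enforce mechanically. The sketch below, in Python, flags migration statements that contain a drop or a rename. It is an illustration and not the tooling the systems actually used: `contract_violations` is a hypothetical name, and the keyword match is deliberately naive (it would also flag the words inside a string literal or comment).

```python
import re

# Keywords treated as contract violations while an old version is still live.
# Whole-word match, so a column named "dropped_at" is not flagged.
BREAKING = re.compile(r"\b(DROP|RENAME)\b", re.IGNORECASE)


def contract_violations(migration_sql):
    """Return the statements in a migration that drop or rename anything."""
    violations = []
    for statement in migration_sql.split(";"):
        statement = statement.strip()
        if statement and BREAKING.search(statement):
            violations.append(statement)
    return violations
```

Run against a migration before deployment, an empty result means the change is additive in the narrow sense this check can see. Drops and renames then move to a later migration, after the old version has retired.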

Automated recovery came from the same pressure. A watchdog script started Tomcat if it stopped running, restarted it if the application stopped responding, and restarted it if memory usage crossed a threshold. Every restart triggered a page. The system stayed up with minimal disruption, and we could investigate the root cause before customers noticed. The chain held for that operating model, on single servers with no load balancers or redundant instances.
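The watchdog's decision logic is small enough to sketch in Python. The names `Action`, `decide`, and `should_page` are hypothetical, and so are the parameters and their units. How the original script probed the process, the application, and memory is not described, so those checks are passed in as plain values. Paging on a start as well as a restart is also an assumption.

```python
from enum import Enum


class Action(Enum):
    NONE = "none"
    START = "start"
    RESTART = "restart"


def decide(process_running, responding, memory_used_mb, memory_limit_mb):
    """Map one round of health checks to a watchdog action."""
    if not process_running:
        return Action.START  # Tomcat is not running at all
    if not responding:
        return Action.RESTART  # process is up but the application is hung
    if memory_used_mb > memory_limit_mb:
        return Action.RESTART  # restart before memory pressure causes an outage
    return Action.NONE


def should_page(action):
    """Every intervention notifies a human (assumption: starts count too)."""
    return action is not Action.NONE
```

The order of the checks matters: a stopped process cannot respond, so the cheapest check runs first and the responsiveness probe only runs against a live process.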

The pain-driven filter is reactive

Pain-driven filters evaluate what has already hurt. They can't address risks that haven't materialized. Every system running under budget constraints carries gaps the filter hasn't been forced to evaluate. The failure that would expose them hasn't arrived yet.

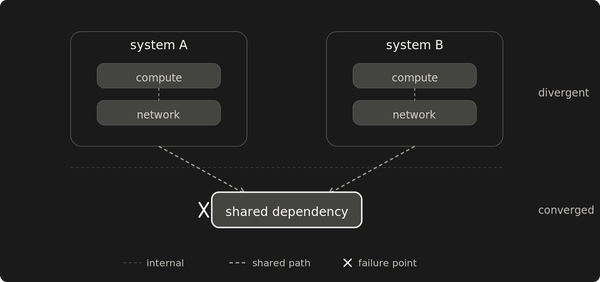

The data center had redundant power, multiple upstream carriers, and 24/7 operations staff. Road construction severed a fiber line on a nearby highway, and the facility's redundant fiber feeds weren't live yet. Six years of continuous operation, isolated in an afternoon. Daily backups, cold spares, tested recovery procedures: all in the same building as production. Inaccessible.

The constraint logic had evaluated geographic redundancy and rejected it. A second dedicated server at a different provider would have doubled the infrastructure bill for a cold spare that might never activate. The data center's own redundancy specs made that tradeoff defensible. Customers expected the systems to work, but the single site had never failed, so the expectation never pushed the architecture past what the budget could justify.

The fiber cut moved the lack of geographic redundancy from a risk that hadn't materialized into one that had. The immediate response went through the same filter: offsite backups to a second provider's object storage. Daily snapshots, recoverable to any provider. The cheapest fix that addressed the newly materialized risk.

The cloud migration came later, when it made financial and operational sense on its own terms. DigitalOcean's $5/month base price made the economics work. Each customer system moved to its own right-sized cloud instance at $12-$24/month, replacing a monolithic bare-metal bill. The migration also resolved the geographic redundancy gap the fiber cut had put on the radar: each system now ran in its own region with offsite backups to a separate provider.

Recovery time objective (RTO) dropped from 24 hours to 30 minutes per system. Recovery point objective (RPO) stayed at 24 hours: daily offsite backups, because continuous replication would have consumed the destination instance's resources and eaten the margin. Cold multi-cloud DR at $10/month total. Thirty-minute recovery without turning low-margin hosting into a loss leader.

Even after cloud pricing made geographic redundancy affordable, the filter still applied: cold redundancy, because hot replication would eat the margin.

Constraints compound into margin discipline

Budget, headcount, and customer expectations each shaped the architecture independently. Together they forced an engineering discipline that none of them would have produced alone.

The spending ceiling kept the stack small. No operations role meant the people who built the systems carried the consequences of every shortcut. Customer expectations set the quality floor. Under all three, every capability had to be simple enough to build, cheap enough to run, and reliable enough that the person who shipped it could sleep.

In constrained environments, teams often overinvest in visibility for edge-case complexity that produces little real-world loss, and underinvest in the controls that keep the system commercially and operationally viable.

Isolate the real failure mode. Place capability at the cheapest layer that holds it. Speculative complexity doesn't pass the filter.

Under hard constraints, every architectural decision is a margin decision under uptime pressure.