Cultural transformation has an error budget

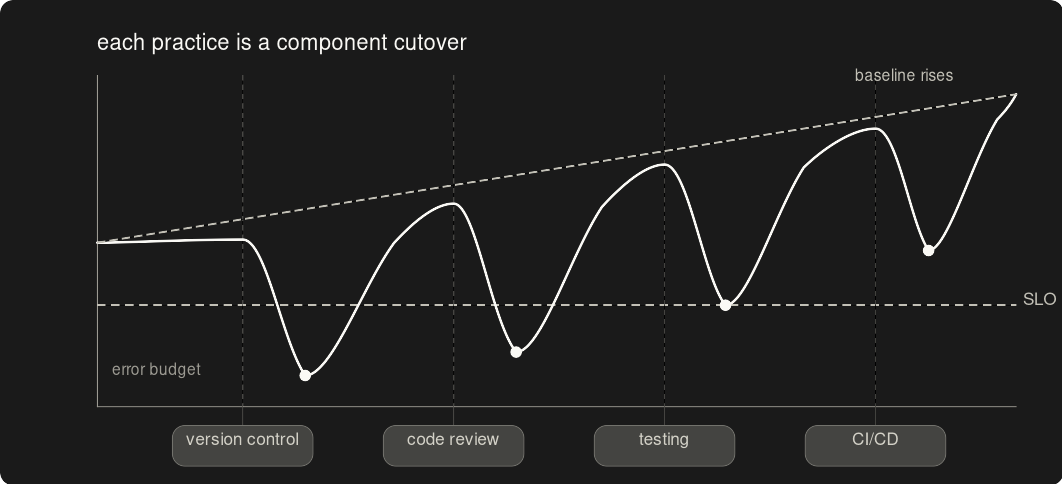

Each practice is a component cutover. The team's own metrics are the SLIs. Regressions consume error budget. Culture shifts as a downstream consequence.

Operations teams already have the discipline. System migration mechanics move it into code.

They troubleshoot infrastructure methodically, write up findings, build procedures so the same failure doesn't repeat. That rigor rarely reaches their own scripts and configurations. Production changes happen through direct server edits because the path works and nobody framed coding practices as the same systematic work.

Each practice introduced is a component cutover. The team's operational metrics are the SLIs. The SLO is the acceptable range for each one. Each regression consumes error budget. When the budget is spent, the practice gets pulled back, adjusted, and reintroduced. The cultural shift follows from applying existing discipline through better tooling.

Call it what they already do

Framing new practices as training implies the team has been doing something wrong. Professionals who keep critical systems running often resist that framing, even when they'd accept the practice itself.

The same practices land as extensions of existing discipline. Version control is change tracking for code. Code review catches problems before deployment, using the diagnostic instincts the team already applies to infrastructure. Testing extends upstream: find the defect before it ships, with skills the team already has.

Metrics control the pace

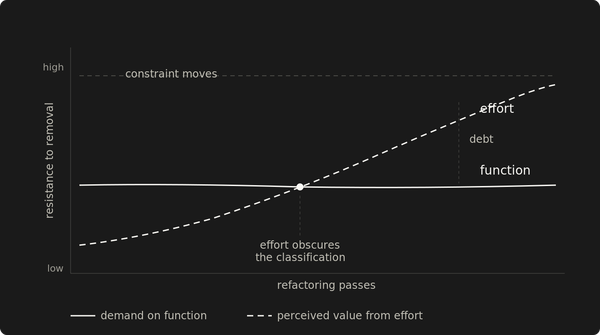

A system migration that degrades performance beyond the SLO triggers a rollback. Cultural transformation needs the same mechanism.

The SLIs come from metrics the team already owns and can explain: deployment frequency, incident resolution time, manual effort per change. The SLO for each sets the acceptable range: a floor for deployment frequency, a ceiling for resolution time and manual effort. A practice that doubles manual effort has consumed its error budget. A practice that slows deployments 10% for two weeks might be spending budget on adjustment, which is recoverable if the signal bounces back.
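The budget arithmetic can be sketched in a few lines. This is a minimal illustration, not a prescribed formula: the `Slo` class, the 25% tolerance, the two-week budget, and the baseline numbers are all assumptions chosen so that the two cases above (a 10% deployment slowdown and a doubling of manual effort) come out as described.

```python
from dataclasses import dataclass


@dataclass
class Slo:
    """Acceptable range for one SLI, relative to the pre-practice baseline."""
    name: str
    baseline: float
    max_regression: float    # assumed tolerance, e.g. 0.25 = 25% worse than baseline
    higher_is_better: bool   # True for deployment frequency, False for manual effort

    def regression(self, observed: float) -> float:
        """Fraction by which `observed` is worse than baseline (0.0 if not worse)."""
        if self.higher_is_better:
            change = (self.baseline - observed) / self.baseline
        else:
            change = (observed - self.baseline) / self.baseline
        return max(0.0, change)


def budget_remaining(slo: Slo, weekly_values: list[float], budget_weeks: float) -> float:
    """Budget left, counted in 'weeks at the full tolerated regression'."""
    spent = sum(slo.regression(v) / slo.max_regression for v in weekly_values)
    return budget_weeks - spent


# Hypothetical baselines: 10 deployments per week, 2 hours of manual effort per change.
deploys = Slo("deployment frequency", baseline=10, max_regression=0.25, higher_is_better=True)
effort = Slo("manual effort per change", baseline=2, max_regression=0.25, higher_is_better=False)

# Two weeks at 10% fewer deployments: budget is being spent but is not exhausted.
deploys_left = budget_remaining(deploys, [9, 9], budget_weeks=2)

# One week at double the manual effort: the budget is gone.
effort_left = budget_remaining(effort, [4], budget_weeks=2)

print(f"{deploys.name}: {deploys_left:.1f} weeks of budget left")
print(f"{effort.name}: {effort_left:.1f} weeks of budget left")
```

A negative result is the rollback trigger: the practice gets pulled back and adjusted. A positive result only says there is room to wait and see whether the signal recovers.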

The signals move on their own. The interpretation requires judgment. Rejection looks like a dip that correlates with a specific practice, shows up across the team, and draws complaints about the practice itself. Adjustment is different: the dip coincides with an unrelated incident, affects only the people still building the new habit, or fades within a sprint. Check-ins make that distinction. Without them, every regression looks the same.
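The distinction can be written down as a checklist to bring to a check-in. The sketch below is a hypothetical aid rather than a decision rule: the `DipObservation` fields and the simple counting scheme are assumptions, and the answers still come from talking to the team.

```python
from dataclasses import dataclass


@dataclass
class DipObservation:
    """Answers gathered at a check-in about one metric dip (all fields hypothetical)."""
    started_with_practice: bool      # dip correlates with a specific practice
    team_wide: bool                  # shows up across the team, not just a few people
    complaints_about_practice: bool  # complaints target the practice itself
    unrelated_incident: bool         # dip coincides with an unrelated incident
    faded_within_sprint: bool        # signal recovered within a sprint


def read_dip(obs: DipObservation) -> str:
    """Count the signs on each side; a tie means more conversation is needed."""
    rejection_signs = sum([
        obs.started_with_practice,
        obs.team_wide,
        obs.complaints_about_practice,
    ])
    adjustment_signs = sum([
        obs.unrelated_incident,
        not obs.team_wide,           # only the people still building the habit
        obs.faded_within_sprint,
    ])
    if rejection_signs > adjustment_signs:
        return "rejection"
    if adjustment_signs > rejection_signs:
        return "adjustment"
    return "unclear"


rejected = DipObservation(True, True, True, False, False)
adjusting = DipObservation(True, False, False, False, True)
print(read_dip(rejected), read_dip(adjusting))
```

Without the check-in there is nothing to fill these fields with, which is the point: the metric alone cannot tell the two cases apart.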

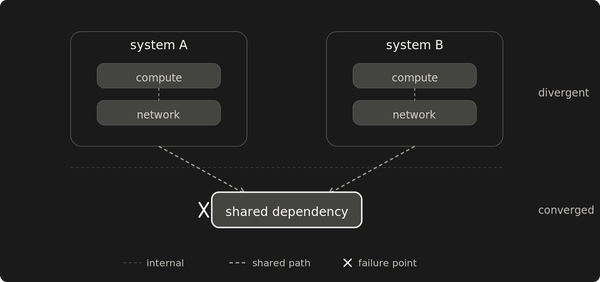

The big-bang cutover fails for culture too

Introduce version control, code reviews, testing, and CI/CD in the same sprint. The team hears one message: everything you've been doing is wrong.

Frustration comes from two directions. One is visible: "That's developer stuff." The objection targets what the practice represents, not what it does. The other is invisible. Under production pressure, hands go to the manual fix. The old response fires before the new one has been practiced enough to compete.

Simultaneous introduction compounds both. Each practice gets a fraction of the repetition it needs. Every subsequent attempt carries the friction of the last one.

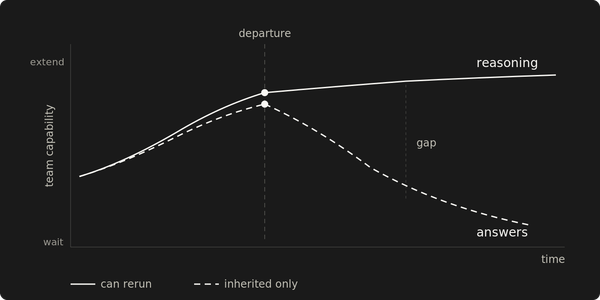

Practices stick when the cost arrives after the payoff

Vocabulary reduces friction at introduction. What makes a practice persist is whether the person gets something concrete before the overhead becomes visible.

Some practices pay fast. When code review catches a production-bound defect in the first week, the person who found it defends the process. Version control's payoff is slower. The daily cost (branching, committing, writing messages) arrives immediately. The return (reverting a bad change, tracing when something broke) may not land for weeks. Front-load the practices whose payoff is fastest. The slower ones arrive with credibility borrowed from the earlier ones.

Resistance to the next practice shrinks when the last one already paid off.

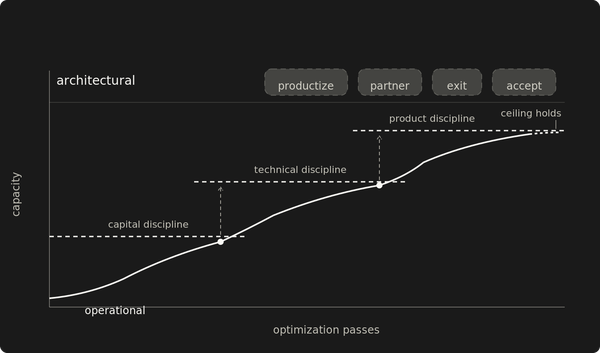

Each practice creates the demand for the next

Version control is the foundation. Deployment pipelines, code review, and testing all need code in a repository. Version control lands fastest when the team can see a path from the repo to the running system, even a manual one. Without that path, version control adds a step without replacing one.

Automated test gating needs a merge process to attach to. The motivation for testing often arrives before the gate does: a defect slips through review into production, and the team feels the cost. By that point, testing feels overdue. The demand emerged from the workflow. The vocabulary framing still helps it land as operational discipline.

Automation formalizes whatever workflow exists. If the workflow is incomplete, the pipeline automates confusion.

Different baselines produce different sequences, but the principle holds: each practice creates the conditions for the next one.
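The dependencies described in this section form a small graph, and any valid introduction sequence is a topological order of it. The sketch below uses Python's standard `graphlib` module; the practice names and the exact edges are one assumed reading of the text (in particular, it treats code review as the merge process that test gating attaches to), and a team with a different baseline would draw a different graph.

```python
from graphlib import TopologicalSorter

# Each practice maps to the practices that must already be in place.
# The edges are an assumed reading of the section, not a fixed rule.
prerequisites = {
    "version control": set(),
    "code review": {"version control"},
    "testing": {"version control"},
    "deployment pipeline": {"version control"},
    "automated test gating": {"code review", "testing"},
}

# static_order() yields every practice after all of its prerequisites.
# Practices with no dependency between them may come out in any order.
sequence = list(TopologicalSorter(prerequisites).static_order())

for step, practice in enumerate(sequence, start=1):
    print(step, practice)
```

Where the graph leaves the order open, as it does between code review, testing, and the pipeline, payoff speed is a reasonable tie-breaker.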

The migration succeeds when nobody calls it one

A production incident or a routine deployment. The team responds through version-controlled changes, tested fixes, automated deployment. The old path doesn't occur to anyone. It stopped being the default somewhere between the second and third practice, and nobody marked the date.

The transformation is complete when the manual edit is the one that would feel unnatural.