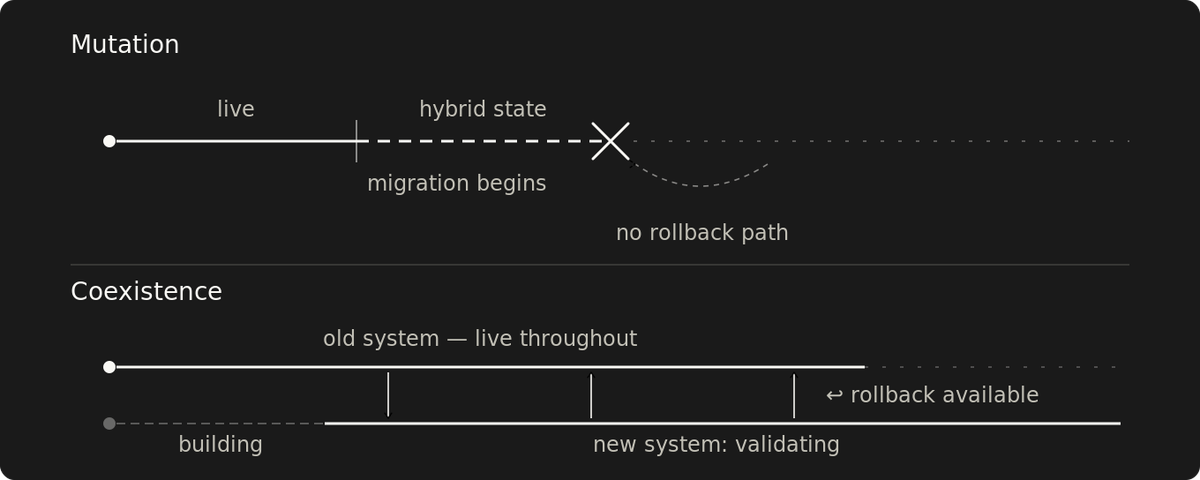

You can't roll back to a system you already changed

Mutation collapses rollback when you need it most. Coexistence keeps rollback available at every step by running old and new side by side.

Rollback is a structural property, not just a runbook.

The plan is simple: if the new system fails, cut traffic back to the old one. But that plan depends on the old system being recoverable. Mutation changes it in place. A failed mutation mid-migration produces a hybrid where the fastest rollback path is gone. Rebuilding the old state from configuration or backups takes longer and carries its own failure modes.

That's the failure scenario: a system stuck between states, with no fast path back.

The old system stays recoverable under coexistence. Build the new one alongside it, shift traffic over, and validate against the old system's baseline. The rollback path degrades as components clear, but it remains available until decommissioning.

The new system runs under real load, measured against that baseline. Divergence within agreed-upon tolerances is expected: differences that don't affect correctness or improvements the migration was designed to produce.

Tolerance is declared before the first traffic shift, tied to correctness or SLO impact, and not renegotiated mid-cutover. Divergence beyond tolerance means traffic switches back. Decommissioning happens when the new system has handled enough traffic patterns within tolerance that fixing forward on the rest is acceptable.
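A minimal sketch of what a pre-declared tolerance check could look like. The metric names, the tolerance values, and the `breaches` helper are all hypothetical, and the sketch assumes every metric is "lower is better", so an improvement never counts as a breach.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tolerance:
    """Allowed divergence from the old system's baseline, fixed before cutover."""
    metric: str
    max_relative_increase: float  # 0.10 lets the new value be up to 10% worse


def breaches(baseline, observed, tolerances):
    """Return the metrics where the new system is worse than baseline beyond tolerance."""
    failed = []
    for tol in tolerances:
        limit = baseline[tol.metric] * (1 + tol.max_relative_increase)
        if observed[tol.metric] > limit:
            failed.append(tol.metric)
    return failed


# Hypothetical values, declared before the first traffic shift.
TOLERANCES = [Tolerance("p99_latency_ms", 0.10), Tolerance("error_rate", 0.05)]
baseline = {"p99_latency_ms": 200.0, "error_rate": 0.010}
observed = {"p99_latency_ms": 215.0, "error_rate": 0.012}

failed = breaches(baseline, observed, TOLERANCES)
action = "switch traffic back" if failed else "continue"
```

Here latency stays within its 10% allowance, but the error rate exceeds its 5% allowance, so the decision is to switch traffic back. The point of the frozen dataclass is that the tolerances are data fixed up front, not something adjusted during the cutover.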

Component health hides system-level failure

Validation against the baseline doesn't mean firing up the new system, running a smoke test, and declaring it ready.

The old system has been running in production long enough to show its response times, error rates, latency distributions, and behavior under load spikes. That's the baseline.

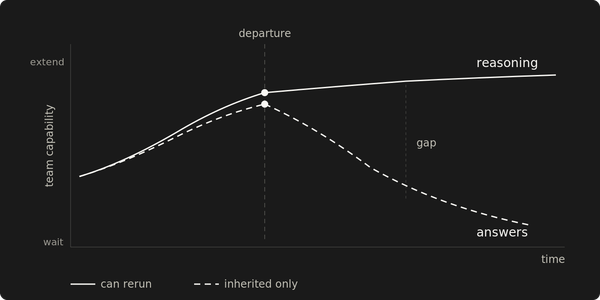

Any non-atomic migration creates a hybrid state. The question is whether each step preserves a rollback path. Failover a component, validate, fail back if it doesn't clear the bar, move to the next one. Each step has a proven return path. Mutation degrades that path with each step. The further you get, the less of the old system exists to return to.

Two validation windows apply, with different risk factors and durations. The short window validates each component: failover, measure against the SLO, and failback if it doesn't clear. The risk factor is whether the failover and failback round-trip fits inside the error budget. Each component's round trip consumes a share of that budget, which forces sequencing decisions. Components that consume too much constrain what's left for the rest.
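The budget arithmetic for the short window can be sketched as follows. The component names, durations, and the 300-second budget are invented for illustration, and the sketch assumes the worst case where every component needs both a failover and a failback. The greedy walk is one simple way to show the constraint, not a recommended scheduling algorithm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    failover_s: float  # estimated disruption during failover, in seconds
    failback_s: float  # estimated disruption if the component must fail back

    @property
    def round_trip_s(self) -> float:
        return self.failover_s + self.failback_s


def plan(components, budget_s):
    """Walk components in order, reserving each worst-case round trip from the budget."""
    remaining = budget_s
    scheduled, deferred = [], []
    for c in components:
        if c.round_trip_s <= remaining:
            scheduled.append(c.name)
            remaining -= c.round_trip_s
        else:
            deferred.append(c.name)  # does not fit in what is left of the budget
    return scheduled, deferred, remaining


# Hypothetical components and error budget.
components = [
    Component("queue", 40.0, 40.0),
    Component("database", 120.0, 150.0),
    Component("cache", 20.0, 20.0),
]
scheduled, deferred, remaining = plan(components, budget_s=300.0)
```

With these numbers the queue and cache fit, while the database's 270-second round trip no longer fits once the queue has reserved its share. That is the sequencing pressure in miniature: either the database goes first, or it waits for a fresh budget period.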

Once a component clears, stop its old workload but keep the infrastructure intact. The rollback path degrades from that point.

The long window validates system behavior: the full system runs under production load with the old infrastructure available for rollback. The risk factor is behavioral divergence under traffic patterns that haven't yet been observed: off-peak behavior, batch processing, and load spikes. Pattern coverage drives exit criteria for this window.

If the new system doesn't clear the bar at either window, rollback remains an option.

Mutation works when the blast radius is proven

When mutation rollback is cheap (the old state can be rebuilt from configuration, or the change is trivially reversible), coexistence is overhead worth skipping.

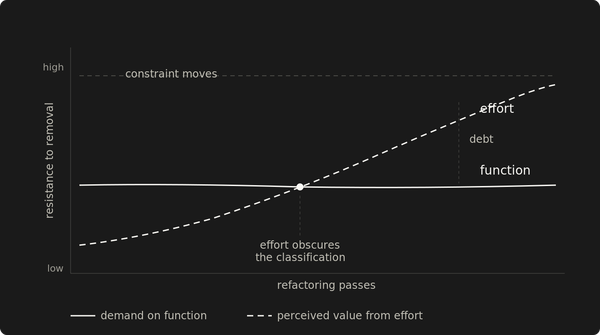

Mutation is a bet on prediction. It works when the failure modes are enumerable before the migration starts, and the recovery path is a procedure the team has already executed. The bet gets worse as the migration grows in scope, duration, or the number of components that change state simultaneously.

Mutation cost is invisible

The cost of a failed mutation doesn't land on the infrastructure invoice. It spans incident response, SLA credits, a delayed roadmap, customer churn, and engineering capacity diverted from planned work. Those costs compound, but the line items don't trace back to the migration decision. Incident response shows up as "operational load." The roadmap slip gets attributed to scope, the SLA credit to "reliability incident."

Coexistence cost gets challenged in every budget review because it's a line item with a number attached. The costs of a failed mutation have already been reclassified by the time any budget review happens.

The budget request is for rollback coverage. The old system stays up until the new one proves itself. The duration is an estimate. The exit criteria are operational.

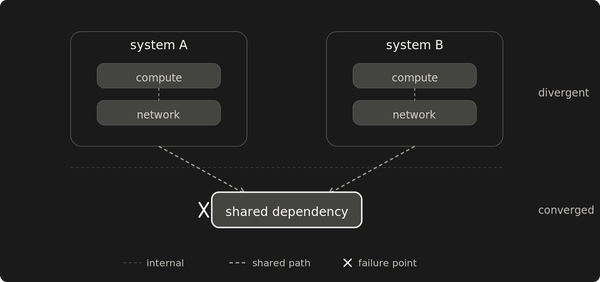

Singleton failover carries a disconnection window

Some components only allow one active instance at a time. The old instance has to stop accepting new work before the new one takes over. Running two active instances, even briefly, violates the constraint the singleton exists to enforce. The failover window produces a disconnection. Whether that registers as an outage depends on how the application handles it and whether the duration fits inside the SLO budget.

A stable interface between the component and its consumers enables the cutover. The old instance stops, the new one starts, and consumers don't know because they talk to the interface, not the instance. The interface adds a component that can fail and needs monitoring. That operational cost is the price of hiding the cutover from consumers.

Rollback uses the same interface and the same disconnection window. For stateless components, that makes rollback symmetric with cutover. For stateful components, every write to the new instance since cutover is data the old instance never saw. The forward operation doesn't carry that burden, but rollback does.

After cutover, rollback exposure grows with every write to the new component. When the system manages write divergence, this is bounded. When it doesn't, exposure compounds. Both the failover and failback durations must fit within the SLO budget. When they don't, the migration has surfaced a resilience gap that must be addressed.
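A rough model of the difference between bounded and compounding exposure. It assumes a constant write rate and treats "managing write divergence" as replicating writes back to the old instance with a fixed lag. Both are simplifying assumptions, and `exposure` is a hypothetical helper.

```python
from typing import Optional


def exposure(write_rate_per_s: float, elapsed_s: float,
             sync_lag_s: Optional[float]) -> float:
    """Writes the old instance has not seen, elapsed_s seconds after cutover.

    sync_lag_s=None means nothing replicates writes back to the old instance.
    """
    if sync_lag_s is None:
        # Unmanaged: every write since cutover is missing from the old instance.
        return write_rate_per_s * elapsed_s
    # Managed: only writes newer than the replication lag are missing.
    return write_rate_per_s * min(elapsed_s, sync_lag_s)


# Hypothetical figures: 50 writes per second, one hour after cutover.
unmanaged = exposure(50.0, 3600.0, sync_lag_s=None)
managed = exposure(50.0, 3600.0, sync_lag_s=5.0)
```

Under these assumptions the unmanaged case has 180,000 writes to reconcile after an hour and keeps growing, while the managed case stays at 250 no matter how long the new instance has been active.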

Compartmentalization contains the failure when the surrounding system tolerates the failover window. That system talks to the interface, not the component, so it doesn't see the migration. The component fails back independently. The blast radius stays inside the compartment.

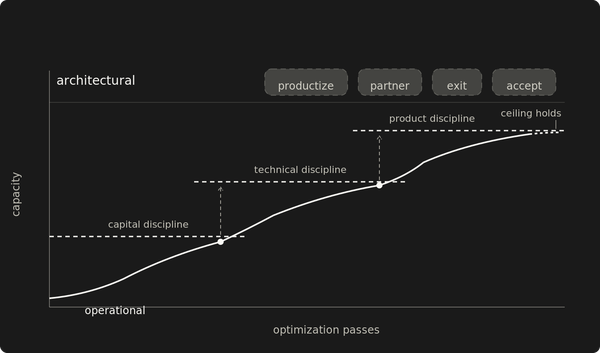

Calendar time is a bad proxy for decommissioning

Decommissioning the old system tests the discipline. The new system looks good, the old one consumes resources, and pressure to decommission grows daily. Infrastructure bills are visible. Operational burden is real. It feels done.

Every traffic pattern the new system handles reduces the risk of decommissioning. A full weekly cycle covers day-of-week variation. Month-end processing exercises the batch paths. Load spikes fill in the rest on their own schedule. Each pattern checked off shrinks the set of unknowns that could force a rollback.

Some patterns can't be enumerated in advance: annual regulatory loads, partner integration batches, and failure modes that only appear during regional outages. The observation window catches some. The rest are a judgment call: how much residual risk justifies the ongoing infrastructure cost. No migration reaches zero unknowns. The enumerable patterns get mechanical exit criteria. The residual risk gets a pre-agreed threshold.
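One way to make the two kinds of exit criteria concrete is sketched below. The pattern names, the numeric residual-risk score, and the 0.05 threshold are illustrative assumptions. How a team actually quantifies residual risk is the judgment call described above.

```python
# Enumerable patterns the new system must handle within SLO (hypothetical list).
REQUIRED_PATTERNS = frozenset({"weekly_cycle", "month_end_batch", "load_spike"})

# Pre-agreed ceiling on residual risk (hypothetical scale from 0 to 1).
RESIDUAL_RISK_THRESHOLD = 0.05


def decommission_check(cleared, residual_risk):
    """Return (ready, missing): ready only if every pattern cleared and risk is under threshold."""
    missing = set(REQUIRED_PATTERNS - set(cleared))
    ready = not missing and residual_risk <= RESIDUAL_RISK_THRESHOLD
    return ready, missing


# A clean week with a spike, but month-end has not happened yet.
early = decommission_check({"weekly_cycle", "load_spike"}, residual_risk=0.02)

# Every enumerable pattern observed within SLO.
later = decommission_check(
    {"weekly_cycle", "month_end_batch", "load_spike"}, residual_risk=0.02
)
```

The first check reports the month-end batch as still missing, so the old system stays up regardless of how clean the week looked. The second passes because coverage is complete and the residual risk sits under the agreed threshold.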

Criteria agreed before the first traffic shift hold when pressure mounts. The SLO is the bar for enumerable patterns. Without that agreement, the decommissioning decision drifts from pattern coverage to "has anyone complained lately" — and the pressure starts the moment the new system handles a clean week.

Coexistence costs more and takes longer. That's the price of a rollback path that works when you need it. Every shortcut that removes the old system early is a bet that nothing left will go wrong. The discipline is not making that bet until the evidence justifies it.